We're not starting with 'The dictionary definition of operationalized is…' (like you were probably expecting) for two reasons. One, it's bad writing. And two, dictionary definitions put us in murky waters when it comes to topics like AI.

By the numbers, AI has generated more unrest and polarization than any other technology movement. That hasn't slowed its adoption. In 2025, KPMG conducted a global study of 48,000 people across 47 countries to gauge AI's impact at the individual level. 66% reported using AI regularly, and 83% said they believed AI would deliver a wide range of benefits.

Only 46% said they were willing to trust the AI systems they were using.

If you don’t trust that your car is safe, are you still climbing in it every day?

So do 54% of those 48,000 people really distrust the systems they’re using? Or do they just lack a tangible understanding of them and label that uncertainty as distrust?

The second is likely the more accurate read. When we don't understand something, we reach for dictionary definitions and tangible explanations to make sense of it. But tying singular definitions to concepts that aren't singular is exactly how knowledge, competency, and expertise gaps get created. Knowing the definition of AI does little to support a working knowledge of how it functions.

Just like knowing how to use AI does little to support the ability to operationalize it (no matter what Webster's tells you).

The strategy gap (and how it leads to pilot purgatory)

In 2024, a Gartner report found that 85% of company AI initiatives never made it to production. In 2025, CIO reported that 88% of companies surveyed had invested in AI programs that never made it past pilot.

The data pools, industries, and company types all differed. That variance doesn't make the results any less troubling.

Zoom in on insurance specifically: Deloitte's 2024 industry report found that 76% of US insurance firms had implemented gen AI in at least one business function, but only 10% achieved scaled deployment in any individual function. And in case you were wondering, 2025 didn’t move the needle.

It’s not an engineering problem. 84% of AI implementation failures are leadership-driven, not technical. That's not an indictment of leadership (entirely). It means there either wasn't a clear strategy in place, or the strategy didn't include a clear path to scale.

The barriers P&C carriers most commonly report include data quality and AI readiness, legacy architectures that can’t support real adoption, skill and resource constraints, regulatory hurdles, and fragmented initiatives that couldn't be centralized.

AI initiatives demand substantial investment of time, money, and talent. So when one stalls, the first reaction isn’t, “This isn’t going as expected, so let’s step back and reassess where we’re headed,” even when it should be. The instinct is to abandon the program in favor of a new tool, a flashy solution, or a fresh pilot, hoping for a different result without any fundamental shift in approach. (What’s that saying about the definition of insanity?)

It all leads to a patchwork of scattered, fragmented, and redundant applications. None are meaningfully adopted, so none produce real impact. No impact triggers another round of abandonment, and around and around it goes.

The operationalization gap (and why it’s the key to seeing AI impact)

Deploying a triage bot, a standalone detection model, or a document analysis tool on top of your existing tech stack isn't operationalization; it's procurement. Point solutions can make a specific part of the claim process easier, but their impact starts and stops at that single function. If the tool is complex to use or doesn't fit naturally into how adjusters already work, it winds up sitting in a side panel and never becomes part of how the team actually operates.

The same goes for an 'AI suite' that lives in a separate system requiring a separate login. That's not operationalization; it's just another tab to toggle between. Every tool that lives outside your core claims system adds to a coordination tax your team has to pay, and that tax compounds. Adjusters become air traffic controllers across a patchwork of platforms: copy from the AI tool, paste into the claim, verify the data matches, update the policyholder, log the interaction. That’s just adding steps. And if you're adopting AI to make adjusters' lives easier, it shouldn't be a shock when they opt out of tools that do the opposite.

Real operationalization means AI lives in the same place your claim data lives. It takes actions within the same workflows your processes are built on. It operates automatically with the context that your adjusters would otherwise spend hours digging for.

When AI is positioned as something you can 'layer on top of any claims system,' it's a tool, not an operational decision.

‘AI assistants’ are… exactly what they sound like. They assist. They don’t fundamentally support a better way of working.

The language matters because how you perceive what makes AI useful drives the decisions you make around it. Operationalized AI isn't built on short-term wins and vanity metrics. If those are the standards for success, purgatory awaits.

The execution gap (and what success actually looks like)

An overwhelming number of P&C carriers are still running on legacy systems that have been upgraded to death with very little to show for it. As AI became the new must-have technology, carriers repeatedly tried to bolt advanced capabilities onto tech foundations that were never designed to support autonomous automation or real data intelligence. 'Build more integrations' became the rallying cry instead of 'Rebuild the foundation.' The cracks in that methodology stay hidden for a while… usually until it’s time to move past the pilot.

Integration vs. infrastructure

The most common implementation pattern in the market today involves a separate AI vendor platform communicating with your claims system through API connections that pass data through orchestration layers.

APIs aren't the issue. The problem is what happens when you stack tool after tool, and orchestration layer after orchestration layer, on top of each other to make disconnected systems talk. It’s the start of the patchwork framework that plagues AI programs. At a certain point, data passing through that many layers stops being a connection and starts being a liability.

Adjusters end up owning the handoff: defining it, coordinating it, managing it. That creates risk that goes beyond adding days to the claim lifecycle. Every time data moves from system to system and tool to tool, details get missed and errors compound.

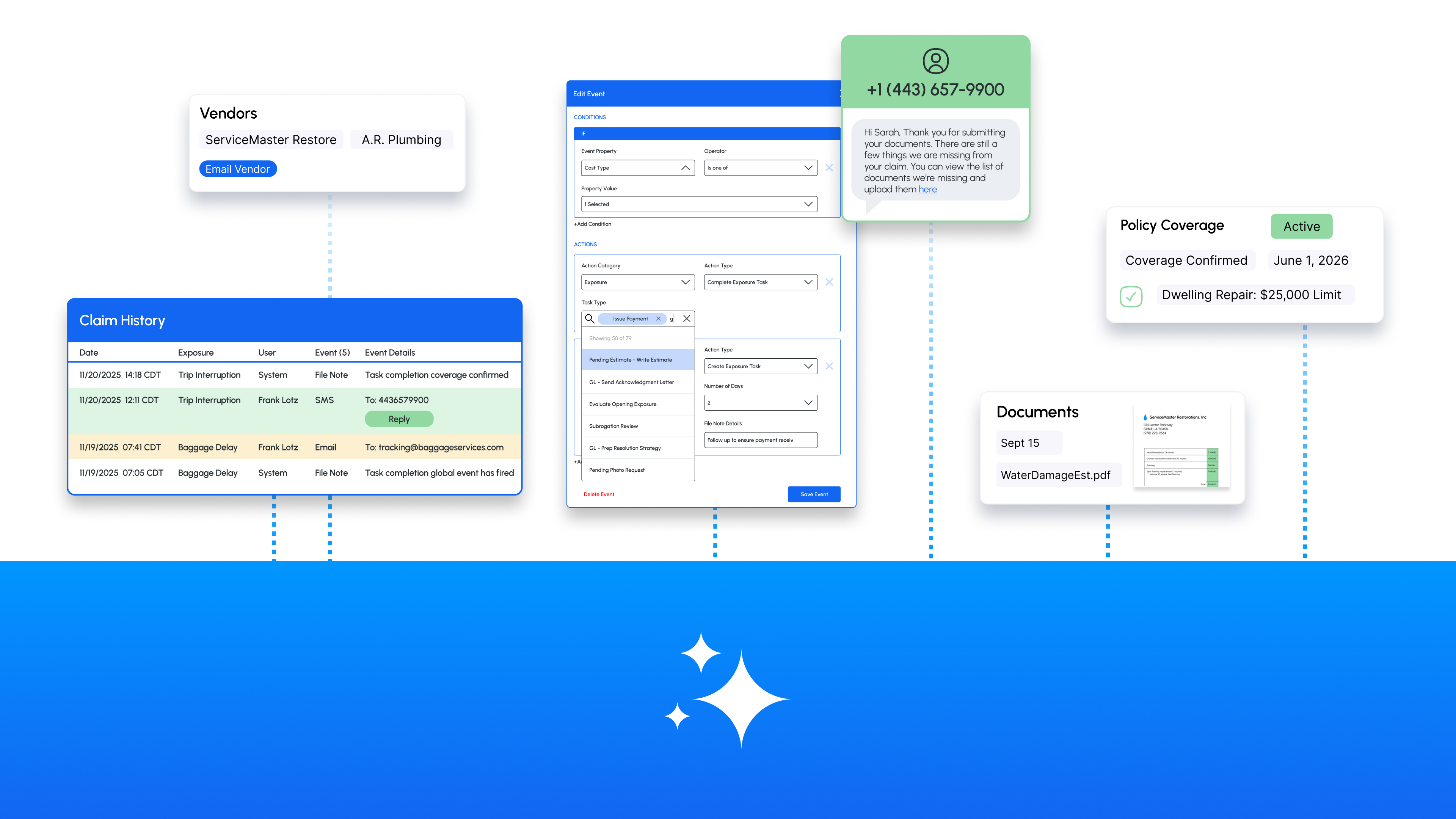

Infrastructure works differently. When AI is embedded into your claims platform's foundation, it operates with full claim context (claim data, exposure details, history, communications, and documents) already in place, without requiring adjusters to shuttle between systems to make it useful. AI that’s natively accessible from within your claims system isn't another tab to manage. It's the same working environment, just extended.

Everything that makes the AI impactful is already there.

Autonomous and on-demand (not either/or)

Most point solutions make you choose. Either AI runs autonomously, or it's an assistant tool available on-demand. Pick one.

It’s a false choice.

Truly operationalized AI means both. It runs inside workflows, without adjusters having to think about it, to triage claims, raise red flags, and trigger actions based on claim events. And it's available on demand exactly when and where adjusters need it: the claim view, side panel, document viewer, and exposure screens.

Same AI, same infrastructure, just different deployment models.

Claims work isn't linear. Sometimes adjusters need AI to handle routine steps autonomously so they can focus on judgment calls, and other times they need AI as a co-pilot to help navigate a complex scenario in real time.

Operationalized AI has to mean both. Point solutions force you to choose, then tell you to buy another tool to cover the gap.

The results gap (and why measuring impact has to go beyond efficiency)

AI's impact on claims can't be measured against the same efficiency metrics the industry has always used. Limiting it that way creates blind spots around real value and points teams toward the wrong improvement opportunities, both of which consistently undermine AI strategies before they reach scale.

The execution variance problem

Your best adjuster closes claims in 12 days with 95% accuracy. Your average adjuster takes 28 days with 78% accuracy. Your new hire takes 45 days with 65% accuracy.

Same training and SOPs, wildly different outcomes.

The variance isn't about effort. It's about expertise built up over 10,000+ claims: pattern recognition, knowing which red flags matter and which don't, and understanding when to escalate and when to close. Your best adjuster has developed intuition that can't be taught with a training deck.

And that expertise walks out the door when they retire (25% of all claims adjusters are predicted to do so within the next five years).

Operationalized AI means equipping every adjuster, rookie or veteran, with the same embedded expertise to catch the same red flags and follow the same best practices. It's the decision scaffolding that raises the floor and the ceiling all at once. Speed and accuracy stop being goals you’re chasing and become structural guarantees built into how work gets done.

The other common problem with point solutions is that they scale linearly. Want more triage capacity? Buy more licenses. More claims volume? Add more seats. Growth requires proportional investment.

AI that’s operationalized breaks that linear relationship.

So you’re not buying capacity; you’re building capability.

The sustainability gap (and how to keep moving your AI strategy forward)

If you can't see the prompt, adjust the model, or control the logic, you haven't operationalized AI. You've outsourced judgment.

Black-box solutions ask you to trust that the vendor's model works for your book of business. Maybe it does, maybe it doesn't. You won't know until you're months into a contract and the results aren't what was promised.

Truly operationalized AI puts you in control of that outcome, because you define how it behaves. You set the rules, adjust the prompts, and decide what’s effective for your workflows, your claim types, and your risk appetite. Control isn't a nice-to-have. It's the difference between AI that adapts to your operation and AI that forces your operation to adapt to it (or abandon it).

The insurance industry keeps conflating "deploying AI" with "operationalizing AI," and the gap is costing carriers speed, consistency, and control.

Deploying AI is easy. Buy a tool, run a pilot, put 'AI-powered' on a slide deck. Check that box.

Operationalizing AI is harder. It requires rethinking workflows, embedding capability into infrastructure, and ensuring AI doesn't just assist but becomes foundational to how work gets done. That level of lift is what keeps a lot of carriers stuck, particularly tech-forward ones that want to invest in building their own solutions.

The build vs. buy question isn't new in claims, but AI has changed the conversation. A 2025 MIT study found that internal AI builds succeed only 33% of the time, while vendor solutions succeed 67% of the time.

But that depends entirely on the vendor. Are you buying infrastructure, or just another point solution? Are you getting operationalized AI where the heavy lift of development, security, data management, and implementation is already accounted for? Or are you attaching a siloed function that, at the end of the day, is just another tool?

Because the industry already has plenty of those.

Snapsheet AI is a fully configurable AI infrastructure integrated directly into your Snapsheet Claims platform, putting AI actions at your fingertips across the claim lifecycle. Because the integration is native, it removes the heavy technical lift of development, security, data management, and implementation that point solutions demand, supporting operationalized AI out of the box from day one.

Launching June 2026.